brainrender#

See brainrender Quickstart for an example of how to create and render scenes.

Introduction#

A core design goal is to facilitate the rendering of any data registered to a reference atlas. To this end, brainrender simplifies the creation of 3D objects from many different types of data (e.g. cell locations, brain regions) with minimal need for dedicated code. In addition, brainrender is fully integrated with the BrainGlobe Atlas API, so you can use brainrender with any atlas supported by the API without changing your code.

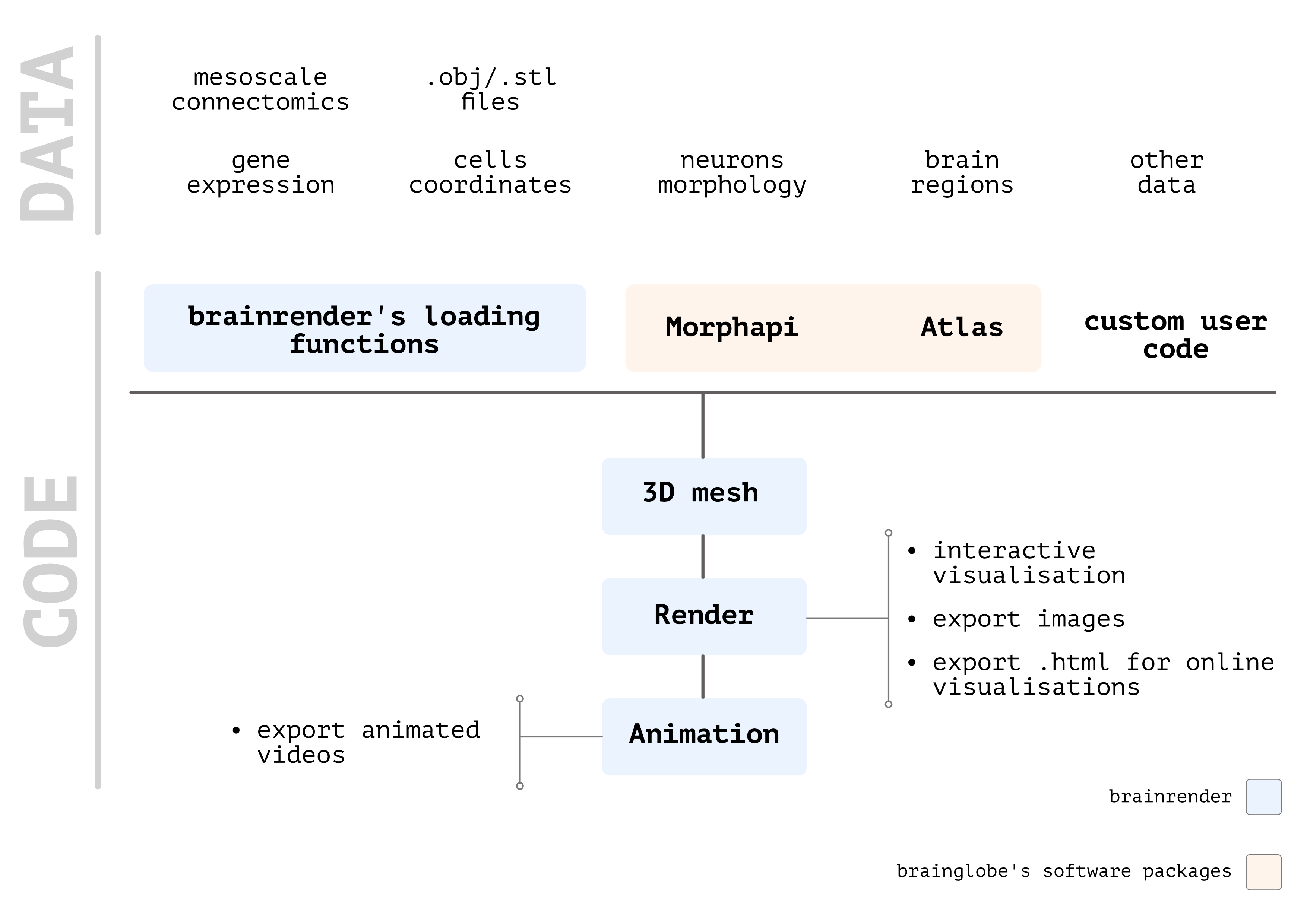

Overview of brainrender’s workflow

The general workflow for any brainrender visualization consists of just a few steps:

1. Load your data and generate a brainrender `Actor`. This can be done using custom code, or with the dedicated `Actor` classes provided by `brainrender`, which can be used to render most types of data.
2. Add your data to a brainrender `Scene`.
3. Render your scene, or use it to create animated videos.
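
The workflow above can be sketched in a few lines of Python. This is a minimal illustration rather than a definitive recipe. The helper names `random_coordinates` and `build_scene`, the bounding box, and the choice of the `"TH"` region are assumptions made for this example, and the coordinates are made-up stand-ins for real data registered to an atlas.

```python
import random


def random_coordinates(n_points, seed=0):
    """Return n_points made-up (x, y, z) positions, in micrometres.

    The bounding box is an illustrative assumption, not taken from any atlas,
    so some points may fall outside the brain.
    """
    rng = random.Random(seed)
    bounds = ((4000, 9000), (2000, 6000), (3000, 8000))
    return [
        tuple(rng.uniform(low, high) for low, high in bounds)
        for _ in range(n_points)
    ]


def build_scene(coordinates, atlas_name="allen_mouse_25um", region="TH"):
    """Build a scene containing one brain region and a set of points."""
    # Imported here so the data helper above can be used without
    # brainrender installed.
    import numpy as np
    from brainrender import Scene
    from brainrender.actors import Points

    # Step 1: wrap the data in an Actor (here the dedicated Points class).
    cells = Points(np.array(coordinates), name="cells", colors="steelblue")

    # Step 2: create a Scene and add the data to it.
    scene = Scene(atlas_name=atlas_name, title="Example cells")
    scene.add_brain_region(region, alpha=0.3)  # region acronym from the atlas
    scene.add(cells)
    return scene
```

Step 3 is then a call such as `build_scene(random_coordinates(200)).render()`, which opens an interactive window. Passing a different `atlas_name` switches atlas, in which case `region` needs to be an acronym that exists in that atlas.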

To learn how to use brainrender in more detail, keep reading this documentation and, when you’re ready, check out the examples at the GitHub repository.

Using Notebooks#

brainrender can be used with Jupyter notebooks, but doing so requires some care.

Find more details here.

Full documentation#

Citation#

If you find brainrender useful and use it in your research, please let us know and also cite the paper:

Claudi, F., Tyson, A. L., Petrucco, L., Margrie, T.W., Portugues, R., Branco, T. (2021) “Visualizing anatomically registered data with Brainrender” eLife 2021;10:e65751 doi.org/10.7554/eLife.65751